This page gives a brief history of my research interests and current focus. See also my list of projects for more details.

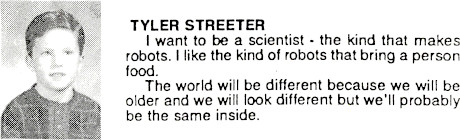

I have always been interested in robots and AI, at least to the extent that a kid can be interested in these things (see Fig. 1). But it wasn't until I was working on an engineering degree that I began to imagine what was really possible.

In 2003, as an undergraduate student, I wanted to build a 3D simulated human that could learn from scratch how to control its own body. A few months later I had learned enough C++, OpenGL, ODE, neural networks, and genetic algorithms to make this happen. Several days of simulated learning produced virtual people that could stand, jump, and walk.

It was thrilling to watch simulated life forms progress incrementally from initial random flailings to their final fine-tuned, purposeful movements. The experience sparked my interest in research, and from that point forward, my drive to study, simulate, and experiment only grew. I was hooked. I had to understand why this particular method worked, where it failed, and what might work better. Would this approach extend to harder tasks? If not, why not? How do humans and animals learn these things? How does the brain learn, and how might it be emulated in software? What is the ultimate learning algorithm?

Since that early experience, I have spent years developing the knowledge and skills needed to answer these questions. I have studied a range of machine learning models, algorithms, and perspectives, including reinforcement learning, neuroscience, data compression, Bayesian statistics, and information theory.

I wrote a software library to simplify 3D physics simulations and another for general-purpose reinforcement learning (RL), then combined the two for a range of embodied AI experiments. I studied artificial curiosity and made a simulated playground for open-ended learning experiments. I built interactive visualization tools for debugging and gaining intuition about various learning methods. I released open-source code to simplify software testing, profiling, and parallelization. I researched brain models and designed a brain-inspired AGI architecture. I started a company to fund my research.

I learned more math, probability, and statistics to explore machine learning at a deeper level. I saw how simple, powerful principles can subsume hosts of heuristic methods, leading to new perspectives and more effective learning algorithms. Thus, as I have gradually gained understanding, my work has become more theoretical.

All of this effort has been in pursuit of simple, general models and learning principles to enable more reliable algorithms, more useful applications, and ultimately to bring us closer to human-like intelligent systems.

Throughout my journey, I have maintained my initial fascination with seeing artificial systems learn from scratch to solve difficult problems. Furthermore, I have come to believe that any problem that can be solved with human intelligence would benefit from better AI. The enormous potential of this field will surely help us tackle society's most pressing challenges.

To maximize my positive impact on society, I work toward simple, general machine learning principles, models, and algorithms that lead to useful applications and provide a foundation for safe, effective, human-level AI. In short, I want a general-purpose software "brain." This is now my primary research agenda, which has gradually taken shape over the years.

This common thread can be seen running through much of my past work, especially in the following sequence of projects:

Specifically, I am driven to discover, through theory and experiment, machine learning methods that:

My ideal methods would effectively represent and learn structure from any environment encountered by the mammalian brain, thus enabling immediate practical applications among all common data domains. These include text, audio, images, video, stocks, 3D geometry, card and board games, video games, and robotics.

These high-level goals can be divided into two parts: modeling arbitrary high-dimensional joint distributions, and goal-directed action selection.
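
As a toy illustration of how these two parts can fit together, the sketch below stores a small joint distribution over an observation, an action, and a reward as a table, then selects the action with the highest expected reward given the observation. It is only a minimal sketch under stated assumptions, not one of the methods described on this page: the probabilities, the variable layout, and the function names `expected_reward` and `select_action` are all invented for the example, and a real high-dimensional joint distribution could not be stored as an explicit table like this.

```python
import numpy as np

# Toy joint distribution p(obs, action, reward) over three binary variables.
# The numbers are invented for illustration; axes are (obs, action, reward).
joint = np.array([
    [[0.20, 0.05],   # obs=0, action=0: p(reward=0), p(reward=1)
     [0.05, 0.20]],  # obs=0, action=1
    [[0.05, 0.20],   # obs=1, action=0
     [0.20, 0.05]],  # obs=1, action=1
])
assert np.isclose(joint.sum(), 1.0)  # a valid joint distribution sums to 1


def expected_reward(joint, obs):
    """Return E[reward | obs, action] for each action, computed from the joint table."""
    p_action_reward = joint[obs]  # proportional to p(action, reward | obs)
    # Normalize each action's row to get p(reward | obs, action).
    p_reward = p_action_reward / p_action_reward.sum(axis=1, keepdims=True)
    reward_values = np.array([0.0, 1.0])
    return p_reward @ reward_values


def select_action(joint, obs):
    """Goal-directed action selection: pick the action with the highest expected reward."""
    return int(np.argmax(expected_reward(joint, obs)))


for obs in (0, 1):
    print(obs, expected_reward(joint, obs), select_action(joint, obs))
```

Here the first part (modeling) is reduced to a lookup table and the second part (action selection) to an `argmax` over conditional expectations. The point is only that, in this toy setting, a good model of the joint distribution is what makes the action choice possible.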