Verve is a software library for reinforcement learning that I developed as part of my master's thesis. Its core object is an intelligent agent with user-defined inputs and outputs, including arbitrary reward signals to guide the agent's behavior. (The word "verve" means vitality, liveliness, aptitude, or talent.)

For all the details, see my master's thesis.

Reinforcement learning (RL) is a branch of machine learning involving sequential decision making with sparse feedback. It is generally useful in situations where a well-defined control algorithm is unknown but may be learned from direct sensory-motor experience. Given only a scalar reinforcement signal as it explores its environment, the agent optimizes its behavior to earn more reward, thus learning the desired control policy. RL provides a fairly general way to build systems that learn to control things. For example, such systems could learn to control robots, drive vehicles, or direct non-player characters (NPCs) in video games.

Note that RL is not always the right approach. For example, if rich feedback is available in the form of complete sensory-motor data samples, a better approach is pure supervised learning. RL is more appropriate when the desired action for any given input is unknown.

Verve combines several well-studied RL methods (radial basis functions, single-layer feedforward neural networks, temporal difference learning, and planning) with a few experimental methods (artificial curiosity and internal uncertainty estimation) in a novel learning architecture. Verve's design principles are 1) general purpose (applicable to a wide range of problems), and 2) easy to use (small, intuitive API). It is intended both for discrete environments with discrete time steps and for real-time control in continuous environments (e.g. video games and robotics).
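To make two of the ingredients listed above more concrete, here is a minimal sketch of Gaussian radial basis function features feeding a linear value estimate that is updated with TD(0). It is not taken from Verve's source and does not describe Verve's architecture; the names `rbfFeatures`, `LinearValue`, and `tdUpdate`, and all parameter choices, are assumptions made for illustration.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical helper (not part of Verve): encode a scalar input in [0, 1]
// as the activations of evenly spaced Gaussian radial basis functions.
std::vector<double> rbfFeatures(double x, std::size_t numCenters, double width)
{
    std::vector<double> features(numCenters);
    for (std::size_t i = 0; i < numCenters; ++i)
    {
        // Centers are spread evenly over [0, 1]; a single center sits at 0.5.
        double center = (numCenters > 1)
            ? static_cast<double>(i) / static_cast<double>(numCenters - 1)
            : 0.5;
        double d = x - center;
        features[i] = std::exp(-(d * d) / (2.0 * width * width));
    }
    return features;
}

// Hypothetical linear value function: V(s) = w . phi(s).
struct LinearValue
{
    std::vector<double> weights;

    explicit LinearValue(std::size_t numFeatures) : weights(numFeatures, 0.0) {}

    double value(const std::vector<double>& phi) const
    {
        double v = 0.0;
        for (std::size_t i = 0; i < weights.size(); ++i)
            v += weights[i] * phi[i];
        return v;
    }

    // One TD(0) step: move V(s) toward reward + gamma * V(s').
    // Returns the TD error so the caller can inspect it.
    double tdUpdate(const std::vector<double>& phi, double reward,
                    const std::vector<double>& phiNext,
                    double gamma, double alpha)
    {
        double tdError = reward + gamma * value(phiNext) - value(phi);
        for (std::size_t i = 0; i < weights.size(); ++i)
            weights[i] += alpha * tdError * phi[i];
        return tdError;
    }
};
```

Calling `tdUpdate` once per time step with the features of the previous and current inputs gradually shapes the value estimate from nothing more than the scalar reward.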

This code shows how to create an Agent and set up a loop to update the Agent's Observation, reward, and action. Note that several function calls here are user-defined (initEnvironment, the computeDiscreteInput and computeContinuousInput functions, computeReward, the performAction functions, and updateEnvironment).

// Define an AgentDescriptor.

verve::AgentDescriptor agentDesc;

agentDesc.addDiscreteSensor(4); // 4 possible values.

agentDesc.addContinuousSensor();

agentDesc.addContinuousSensor();

agentDesc.setContinuousSensorResolution(10);

agentDesc.setNumOutputs(3); // 3 actions.

// Create the Agent and Observation.

verve::Agent agent(agentDesc);

verve::Observation obs;

obs.init(agent);

initEnvironment();

// Loop forever (or until desired learning performance).

while (1)

{

verve::real dt = 0.1;

// Update the Observation based on the current state

// of the world. Each sensor is accessed via an index.

obs.setDiscreteValue(0, computeDiscreteInput());

obs.setContinuousValue(0, computeContinuousInput0());

obs.setContinuousValue(1, computeContinuousInput1());

verve::real reward = computeReward();

// Update the Agent.

unsigned int action = agent.update(reward, obs, dt);

// Apply the chosen action to the environment.

switch(action)

{

case 0:

performAction0();

break;

case 1:

performAction1();

break;

case 2:

performAction2();

break;

default:

break;

}

// Simulate the environment ahead by dt seconds.

updateEnvironment(dt);

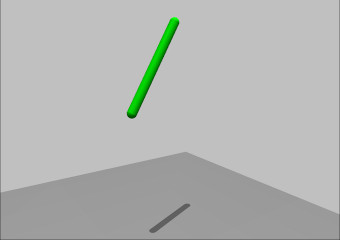

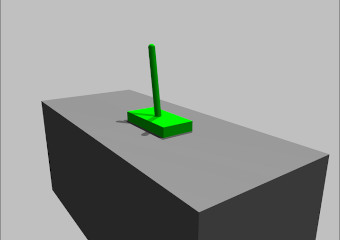

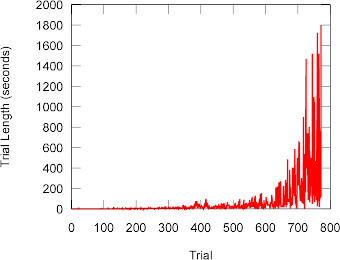

}The pendulum swing-up task is a standard RL problem that involves controlling a simple physical system. A pendulum suspended in midair must swing itself up to its highest position to receive a reward, but it is not physically possible to achieve this state without first learning to swing back and forth to build momentum.

For my thesis, I implemented this environment using OPAL to simulate physics and Ogre to render the graphics. This task and the inverted pendulum below helped verify that Verve agents can learn to solve standard control problems.
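As a rough illustration of the swing-up setup, the following is a minimal standalone sketch of a damped pendulum driven by a torque, with a reward given only near the upright position. It is not the OPAL-based environment from the thesis; the names `PendulumState`, `stepPendulum`, and `swingUpReward`, the physical constants, and the reward threshold are all assumptions.

```cpp
#include <cmath>

const double kPi = 3.14159265358979323846;

// Hypothetical state: angle 0 means hanging straight down, pi means upright.
struct PendulumState
{
    double angle;           // radians
    double angularVelocity; // radians per second
};

// Advance the pendulum by dt seconds using semi-implicit Euler integration.
// The constants below are illustrative, not taken from the thesis.
PendulumState stepPendulum(PendulumState s, double torque, double dt)
{
    const double gravity = 9.81; // m/s^2
    const double length = 1.0;   // m
    const double mass = 1.0;     // kg
    const double damping = 0.05; // N*m*s/rad

    double inertia = mass * length * length;
    double accel = (-mass * gravity * length * std::sin(s.angle)
                    - damping * s.angularVelocity
                    + torque) / inertia;

    s.angularVelocity += accel * dt;
    s.angle += s.angularVelocity * dt;
    return s;
}

// Sparse reward: 1 when the tip is within heightTolerance of the top
// (measured in pendulum lengths), otherwise 0.
double swingUpReward(const PendulumState& s, double heightTolerance)
{
    double heightBelowTop = 1.0 + std::cos(s.angle); // 0 upright, 2 hanging
    return (heightBelowTop < heightTolerance) ? 1.0 : 0.0;
}
```

With these constants, a constant torque of 2 N·m applied from rest lifts the pendulum only a fraction of a radian, well short of upright, so the reward never arrives unless the controller reverses direction to build momentum.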

The pendulum swing-up test program, the inverted pendulum, and the curious robot playground described below are all available for download (Win32 only).

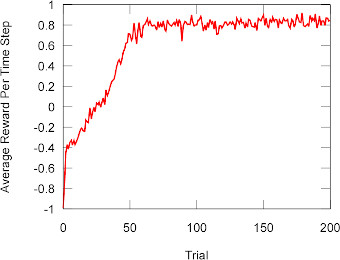

Another standard but slightly more difficult control task is the inverted pendulum (also known as cart-pole) problem. Here a pole (pendulum) is mounted upside-down on a cart. The cart can move left and right only, and the pole can rotate around just one axis. The agent controls the cart but not the pole, and it receives a reward on each time step in which the pole has not fallen to either side. Thus, the agent must learn to move the cart back and forth in order to keep the pole balanced vertically.
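The reward scheme described above can be sketched in a few lines. This is an illustrative assumption about how such a reward might be computed, not code from the thesis: the names `poleHasFallen`, `balanceReward`, and `episodeReturn`, and the idea of treating "fallen" as exceeding a fixed angle from vertical, are hypothetical.

```cpp
#include <cmath>
#include <vector>

// Hypothetical test for failure: the pole counts as fallen once its angle
// from vertical (in radians) exceeds maxAngle on either side.
bool poleHasFallen(double poleAngle, double maxAngle)
{
    return std::fabs(poleAngle) > maxAngle;
}

// Reward of 1 on every time step in which the pole is still up, else 0.
double balanceReward(double poleAngle, double maxAngle)
{
    return poleHasFallen(poleAngle, maxAngle) ? 0.0 : 1.0;
}

// Total reward for a sequence of observed pole angles, stopping at the
// first step on which the pole has fallen.
double episodeReturn(const std::vector<double>& poleAngles, double maxAngle)
{
    double total = 0.0;
    for (double angle : poleAngles)
    {
        if (poleHasFallen(angle, maxAngle))
            break;
        total += balanceReward(angle, maxAngle);
    }
    return total;
}
```

Under this scheme the only way to collect more reward is to keep the pole up for longer, which is what pushes the agent toward a balancing policy.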

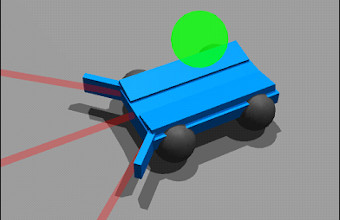

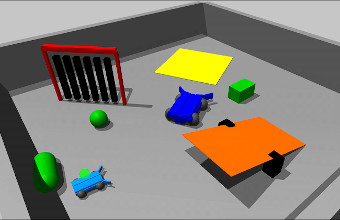

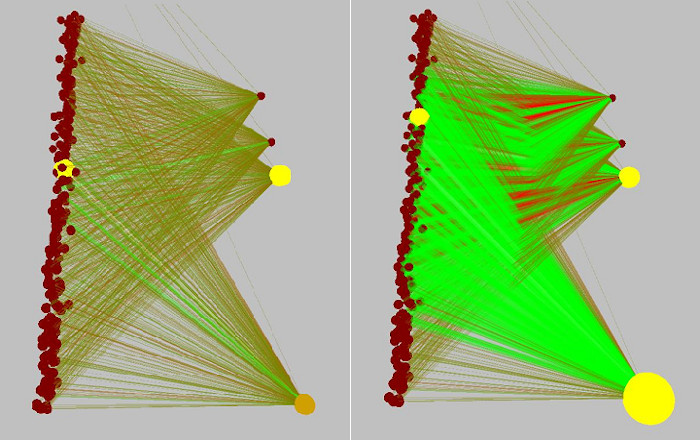

For much of my thesis, I focused on artificial curiosity combined with RL. After my thesis work, I made this simulated playground for curious robot exploration.